Immanentizing the bugocalypse

Asymmetric vibesploitation dept.

25 May 2026

Many feeds have been filled as of late with breathless headlines about the shocking number of security vulnerabilities found using post-2017 AI models. There are several things to note, not least that reporting tends to omit the five-figure price tag of unearthing a single bug.

Low-hanging fruit is hardly in short supply in today's lousy software ecosystem: Mozilla's codebase is over thirty years old and has been rejiggered three or four times. While only one bug was found in Curl, it is atypical in that it is among the most hardened programs in existence (also it only has one job, which makes it less complicated to verify correct behaviour).

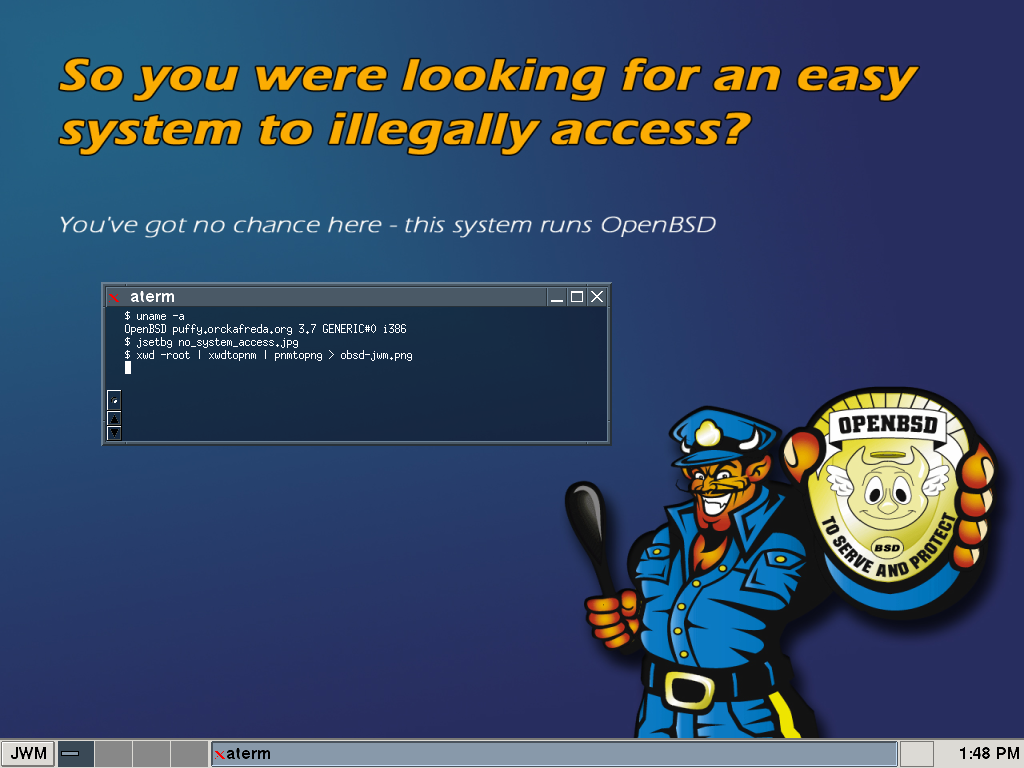

A more fitting subject to get into might be the OpenBSD operating system, which has been security-conscious since its inception and discloses the full details of patched exploits to ensure transparency.

Though, take heed that this discussion might get a tad technical, since it assumes familiarity with an ancient internet protocol known as Electronic Mail.

2020

For context, consider an example from before vibe-coding became a word, the January vulnerability discovered by Qualys in the OpenBSD mail server. This was caused by a function returning 1 where it ought to return zero while verifying that an E-mail address was valid, enabling an attacker to pretend to deliver mail from a local user named e.g. ;command; – this would remotely execute command on the server itself.
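The shape of such a bug can be sketched in a few lines of Python. This is a hypothetical illustration and not the mail server's actual source: the function names, the whitelist of characters and the fall-through branch are all assumptions made up for the example.

```python
import string

# Hypothetical whitelist for the local part (the bit before the @-sign).
SAFE_CHARS = set(string.ascii_letters + string.digits + "._-+")


def local_part_ok(local: str) -> bool:
    """True if the local part is non-empty and uses only whitelisted characters."""
    return bool(local) and all(ch in SAFE_CHARS for ch in local)


def valid_address_buggy(local: str, domain: str) -> int:
    """C-style result: 1 means valid, 0 means invalid. One branch is inverted."""
    if not local_part_ok(local):
        if domain == "":
            # Assumed fall-through for bare local users: the address is
            # waved through even though the check above has just failed.
            return 1  # BUG: should be 0
        return 0
    return 1


def valid_address_fixed(local: str, domain: str) -> int:
    """Same check, but a bad local part is rejected whatever the domain is."""
    return 1 if local_part_ok(local) else 0
```

With these definitions, valid_address_buggy(";command;", "") answers 1 while valid_address_fixed(";command;", "") answers 0; every other input gets the same verdict from both, which is why a cursory test run would not notice the difference.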

Pretty grody considering this is what BSD used to be for: it was a system you could set up on a spare computer, plug into the office network and maybe log in to every couple of months to check for updates.

To be fair, writing the code in question may have been confusing since it was dealing with obscure functionality, namely the ability to deliver mail to users on the same server without typing out the part of the address after the @-sign, which is something that hardly anyone ever takes advantage of outside of university networks.

2026

Hot off the press, we have a couple of OpenBSD bugs discovered last month by RandomDhiraj@SomeLab, aided by the Codex 5.2 model. In this case, the spam filter turned out to have a buffer overflow vulnerability — basically a miscount of where text gets updated, which allows an attacker to poke at regions of computer memory he shouldn't be able to access.

The model's internal reasoning exposition is worth quoting:

…a huge fraction of low-level C bugs show up in the details of how code handles partial writes, errors, and the bookkeeping around pointers and remaining lengths. Mentally, I'm scanning for patterns like "call write(), then advance a pointer and subtract the number of bytes written" — the classic shape is cnt = write(...); len -= cnt; p += cnt;

Note that, unlike a proper code-verification tool, this seems to lean on programmer conventions for what exactly a running tally is called (the logic flow remains the same whether it's named count, cnt or tallywhack).
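For reference, the bookkeeping pattern from the quote can be sketched in Python. This is not the spam filter's code; write_all and StingyWriter are hypothetical names, and the tally is deliberately called tallywhack to show that the logic does not care. In C, getting these sums wrong can walk a pointer out of bounds, whereas Python would merely misbehave or raise.

```python
def write_all(write, data: bytes) -> int:
    """Call write() until every byte of data has been accepted.

    `write` is any callable that takes a bytes-like chunk and returns
    how many bytes it accepted, which may be fewer than offered.
    """
    view = memoryview(data)
    position = 0            # plays the role of the pointer p
    remaining = len(view)   # plays the role of len
    while remaining > 0:
        tallywhack = write(view[position:position + remaining])
        if tallywhack <= 0:
            raise OSError("write() made no progress")
        position += tallywhack   # p += cnt
        remaining -= tallywhack  # len -= cnt
    return position


class StingyWriter:
    """Hypothetical sink that accepts at most `limit` bytes per call."""

    def __init__(self, limit: int):
        self.limit = limit
        self.received = bytearray()

    def write(self, chunk) -> int:
        accepted = bytes(chunk[:self.limit])
        self.received += accepted
        return len(accepted)
```

Feeding b"hello world" through StingyWriter(3) takes four calls and hands over all eleven bytes; dropping either of the two update lines is exactly the kind of miscount the quoted reasoning is scanning for.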

Still, it's fairly impressive for one person's weekend project.

Now recall the 2020 mail-server bug, which was caused by a subtle logic error that instituted Opposite Day so that mail addresses were treated as correct when they shouldn't have been. LLM code-generation tools will have been trained on source code that includes mistakes of this kind, and will merrily replicate them (in fact, the model will get a better score when it does, since it matches the training data). Logic bugs such as these are especially nasty in that they tend to hide from cursory inspection: catching them requires working through every permutation, possibly using a pencil.

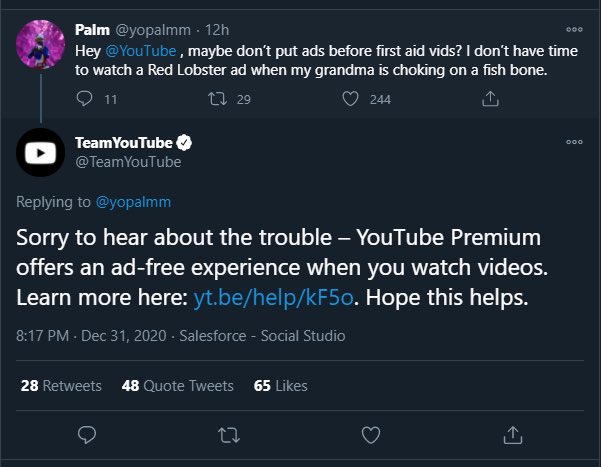

As such, LLM-assisted programming introduces an asymmetry: it's best suited to probing for vulnerabilities, because an exploit only has to work once, whereas an application isn't supposed to be crashing randomly.

When vibe-apps mechanically repeat the mistakes of the past, vibesploits can treat those missteps as a database of patterns to be on the lookout for.

And while management has tended to regard bug-hunting as unproductive, this could change now that it has become AI-Powered™.

(Perhaps automated code analysis might also finally become the priority it should have been twenty years ago; there's always hope.)

The upshot

Traditionally, the reckoning about budgets has been that 90 percent of the cost of software is maintenance.

This state of affairs may now be upended, which could potentially have dramatic effects on the development process, though not in a cost-saving direction. If vulnerabilities become easier to discover, shifting dev burden to before the launch date so that e.g. only 80 percent is maintenance, the up-front share would go from 10 to 20 percent of the total, which would double the up-front cost of creating a new software suite.

None of this indicates that programming is about to become an obsolete skill or that the future of development will be any less concerned with technical details. Best of luck finding new hires who have any concept of a file-system.

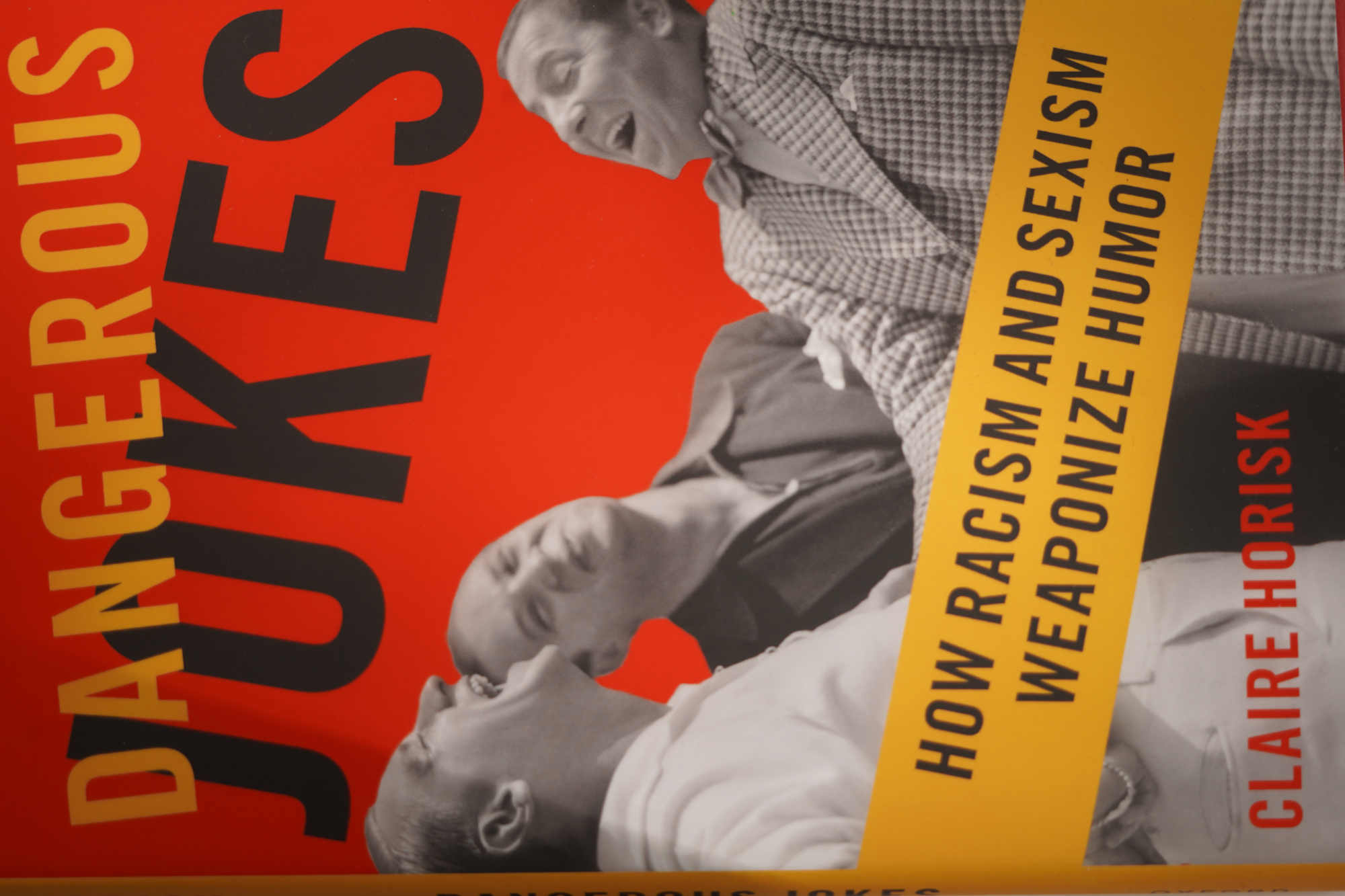

Bookpile: Dangerous Jokes

Lawful Unfunny dept.

19 May 2026

I only feel this appalling sadness. Somewhere, in their upbringing, they were shielded against the total facts of our existence. They were only taught to look one way when many ways exist.

—Bukowski

Be warned that this is a so-called crossover book, meaning it's an academic treatise that was retrofitted to have slightly broader appeal. In this case, the concession to pop-science is made by way of a three-page afterword of allegedly practical advice which won't tell anyone anything they didn't already know, and the 161 pages preceding it are distinctly unfunny.

Offensive jokes are not quoted directly, thereby ensuring that the discussion will be remote from the daily conversations which are supposed to be the book's primary subject (this is not a theory of stand-up comedy, thankfully).

Instead, Horisk mostly busies herself with rearranging the deck chairs of Seventies theory and taking ethical advice from the NYT while the West is merrily amusing itself to death.

It's hard to believe that this book was published three whole years after the writing was spraypainted on the wall in 2021, though that is probably a sign of just how slowly her much-vaunted common ground shifts.

Much like how the concept of a carbon footprint shunted the blame onto the individual consumer for not Buying Green™, we here seem to be just one step from the introduction of bigotry offsets.

As a good consumer solipsist, Horisk can only see the personal guilt that might be incurred by a single listener in the audience of a bigoted joke, neglecting that jokes are usually told in a crowd. Questions of how they might bolster in-group cohesion are largely relegated to footnotes, and so we are left with an etiquette manual for fine dining at a time when it might have been more prudent to point to where the lifeboats are.

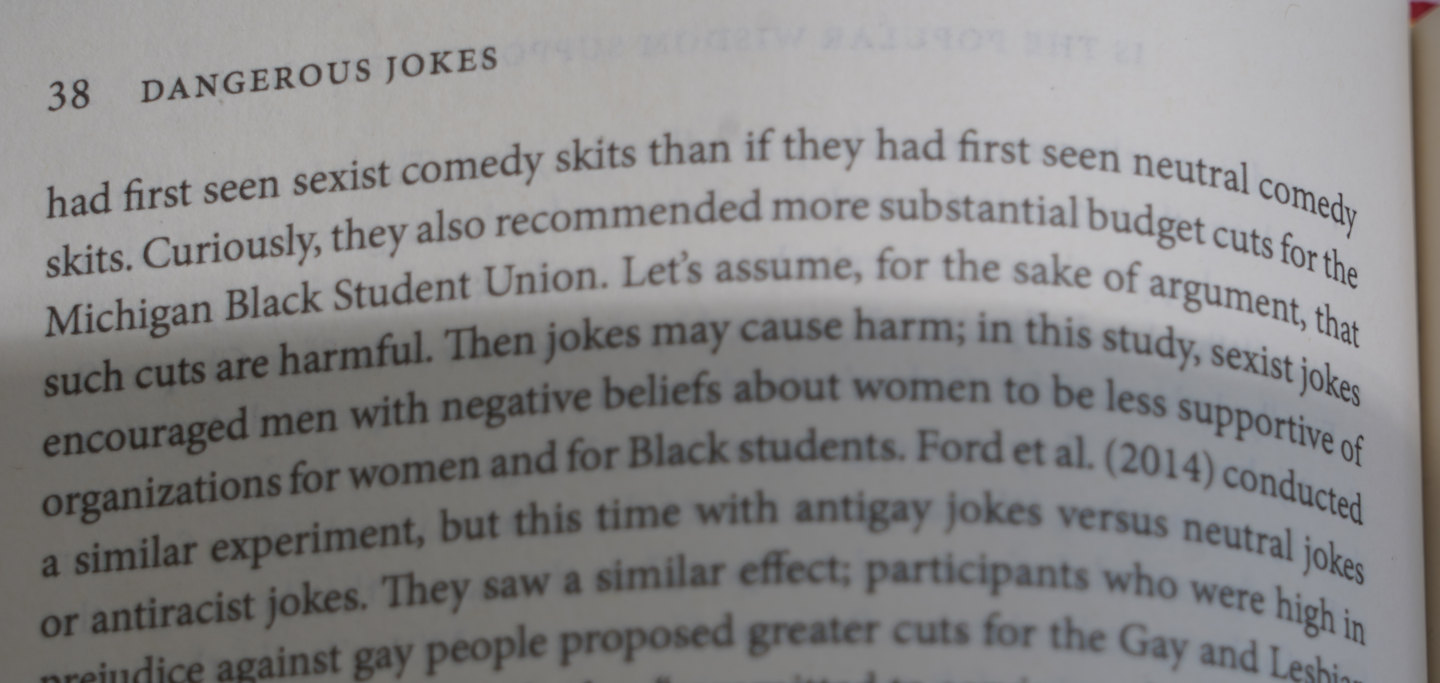

The danger mentioned in the title seems to be chiefly the risk of getting sued. Court cases referenced include Moore v. Kuka Welding Systems 1999, Henderson v. Irving Materials Inc. 2004, Witt v. Roadway Express 1998, Smith v. Norwest Financial Acceptance, Inc. 1997, Baskerville v. Culligan International Co. 1995, Oncale v. Sundowner Offshore Services, Inc. 1998 (in which Scalia clarified his view on smacking buttocks: it's a sometimes-food) and Swinton v. Potomac Corporation 2001. Of particular interest is Swinton, where the jokester habitually carried a handgun and so could not be presumed to simply mean things in a non-intimidating way: this might suggest that humour depends in part on a temporary potential for self-abasement, which evaporates if there is a power imbalance. (That is, comedy requires going out on a limb.)

Engaging with someone suffering from rich kid syndrome, seemingly unable to even imagine that they could ever be brought low, has a uniquely annoying character to it.

Paraphrasing Sartre, we might say that the wannabe brunchlord asserts his right to play, to soar in the realm of metaphor, far from the madding crowd with its cries of bread and rights abuses, while saddling his (unambitious) opponent with the duty to speak seriously; thereby branding him as someone dour who doesn't enjoy a good joke now and again and might not even be capable of advanced thinkery such as daydreams about how he's going to be rich tomorrow.

(The ethno-supremacist meanwhile attains his easy smugness simply by claiming membership in a dominant ethnic group, a simple way out with an obvious appeal to the sort of people who do not like to think too closely about much of anything. When cries of elitist are so common, it's perhaps an accusation that someone isn't playing the usual joking-game, or plays by different rules.)

Throughout this book, the watchword is politeness über alles, to play the game and never step outside the lines. The problem with this is that "Be permissive with what you accept, and conservative in what you transmit" may be a good principle for E-mail servers, but leads to squalor in real life; the conversational equivalent of being swamped with junk mail because it would be rude to stuff the postage-paid return envelopes.

Where did this whole fixation on inoffensiveness come from? A key insight had to be furnished by a PhD student: the dinner-table peace is all about not upsetting peepaw (which brings to mind the anecdote about someone's grandma who, upon hearing the title of the book Rethinking Economics, blurted out "Is that even allowed?") In this vein, all-ages restrictions seem to be more for the benefit of the old than the young, so it should be no surprise that chasing the broadest possible target audience has resulted in a calcification of pop culture.

Grandparents of course are the product of previous cultural evolution; so a fixation on politeness emphasizes the steady-state, the outcome rather than the process. As it turns out, the most interesting aspects of humour may be instead its dynamic potential, something that is touched on briefly:

This indicates a semantic connection between thoughts of various different out-groups, minorities etc., but the point is not developed further.

The so-called practical advice for how to respond to bigoted jokes has clearly not been field-tested, serving up only the claudicant observation that there is no way to confront a bigot without risking your own likeability. This does identify an asymmetry to conversations that may explain why there are so many crybullies (tough guys who can dish it out but not take it): when there is a social cost to calling their bluff, but practically none to bluffing in the first place, most people simply won't bother and may in fact come to resent the few do-gooders who think they have the right to upset the social order.

Claire Horisk, Dangerous Jokes

204 pages, Oxford University Press 2024.

TASTING NOTES: Subpar paper quality for a hardcover edition.

Score: 46°

iSafety on the iInfo 'igh Seas

perverse incentives dept.

21 Apr 2026

Cars are the ultimate consumer good, yet oddly enough the company that attracts consumers like no other has trouble wrapping its design collective's head around it. After a decade of floundering, Apple (Computers) finally gave up on their highly-anticipated collaboration with VW (das Auto) to create a flying Apfelwagen or somesuch which, most importantly of all, had iPhone iNtegratiOn to save you from having to keep your eyes on th'i Tarmac. While the enthusiast press seems mostly to be blaming the failure of Project iTanic on the difficulty curve iNvolved in creating any kind of autonomous road vehicle, in fact the first warning signs became apparent much earlier.

At one point, the otherwise sunnily-disposed Californians had to consider some less-sunny-day scenarios: what should happen in the event of a crash? True to form, Apple's engineers did not stoop to merely tinkering with the problem piecemæl but instead challenged the very paradigm of auto accidents: why don't we just make it so that none of the other cars on the road will ever collide with ours?

It really exposed the consumer solipsism that was always there, the same mindset that results in shaming text messages from non-Apple devices by painting their speech bubbles green (the preferred fix was to simply bully everyone into buying an iBauble; truly the free market at work).

In this case, though, it couldn't be smoothed-over like when customers were unaware what 2G or bandwidth might be.

Now, this kind of engineering-by-wishcasting doesn't necessarily lead to absurd outcomes, as long as you're simply taking a well-understood commodity and making it more convenient.

It is however emblematic of a school of thought that just goes ahead and assumes that everyone else will play nice.

As often as not, the result is that the few who do bother to go along with it wind up footing the bill, or worse: sticking to the speed limit might actually make you a road hazard if you're the only one who does so.

Nowhere is this attitude more prevalent than in legislation, which often seems to presume uniform compliance and nearly always fails to predict the knock-on effects and auxiliary markets that it might create.

Age verification is a typical case of overly literal thinking about the internet: you get carded when you go to a club, so it makes sense that punters should get cyber-carded before they are 200 Okayed into the cyber-club. However, the lack of friction means that Online is less like a busy downtown and more like scattered island-states: if the next port over offers cheaper booze, you can simply lift anchor and be there before happy hour.

As with the astroturfed pushback to 5G networks, there seems to be a concerted effort to go back to a Seventies model of gatekeeping that appears more comforting at a glance to the fax-machine generation. The whole rigmarole also operates on the antiquated assumption that the internet is mostly a frivolous distraction and not an inexhaustible fount of knowledge. When people have to find answers or phone numbers in an emergency, they're going to reach for the sites they're familiar with and the apps they already have on their phone, and any kind of delay or unexpected malfunction could literally cost lives.

Even if by some miracle verification were made completely safe and reliable, it would remain a liability.

Even though confirmation of 「above/below 18」 might be only a single bit, that extra information can be the vital dot in the bitmap that unmasks the user: if you can estimate when someone turned eighteen, you can carry out targeted phishing based on their star sign, and then you've got a customer for life.
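How much a single bit gives away once it is seen to flip can be sketched as follows. This is a toy model under stated assumptions: an observer who happens to see one "below" answer and one later "above" answer for the same user. The function names are hypothetical, and the handling of 29 February is a simplification, since jurisdictions differ on when a leap-day baby comes of age.

```python
from datetime import date, timedelta


def shift_years(day: date, years: int) -> date:
    """Move a date by whole years; 29 February falls back to the 28th."""
    try:
        return day.replace(year=day.year + years)
    except ValueError:
        return day.replace(year=day.year + years, day=28)


def birthdate_window(last_under: date, first_over: date, threshold: int = 18):
    """Bracket a birth date from two answers of an over/under check.

    last_under: latest date on which the check still said "below".
    first_over: earliest date on which the check said "above".
    Returns (earliest, latest) possible birth dates, both inclusive.
    """
    if first_over <= last_under:
        raise ValueError("first_over must come after last_under")
    # The birthday in question fell after last_under, on or before first_over.
    earliest_birthday = last_under + timedelta(days=1)
    return (shift_years(earliest_birthday, -threshold),
            shift_years(first_over, -threshold))
```

Two observations a fortnight apart, say birthdate_window(date(2026, 3, 1), date(2026, 3, 15)), narrow the birth date down to the span from 2 to 15 March 2008, which in this case is already enough to settle the star sign.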

When the internet is regulated with no concern for what network traffic actually looks like, the results are similar to the designers of the Apfelwagen hoping to sweep traffic accidents under the rug altogether, or efforts to ban the sale of alcohol in a place that has ready access to the sea. All the while, the excuses for carrying out this exercise in futility are more two-faced than ever, indulging in the familiar motte-and-bailey tactic: vulnerable women must be saved from self-employment within adult entertainment, children must be shielded from deviant sexualities and perhaps… corrected, even corporally so.

This is all especially problematic in the context of mobile devices since it provides yet another attack surface, perhaps common to most phones in an entire country, enabling the tracking of travel patterns. Previous experiments have shown that simply charting someone's movements throughout a day allows anyone to estimate with a fair degree of precision what they do (the people making guesses in the study were laymen, not experts). Hence, simple surveillance alone makes it easy to estimate whether someone is observant, a habitual pipe-smoker, frequents radical bookstores, or works at a clinic that performs abortions.

RGB gamerfeels

clear as liquid crystal dept.

15 Apr 2026

Blaming the screens for all of society's ills is currently in vogue. The pundits so engaged are, however, usually talking about the new fondleslabs, tablets and smartphones, rather than the desktop monitors they themselves were weaned on. The blind spot is rather telling.

In truth, LCD screens have a lot to answer for. Thanks to their slender profile they caught on pretty much the moment they became viable; this was, however, a decade before the tech matured properly: office workers in particular tended to be saddled with monitors that had a very narrow viewing angle and by and large were nowhere near colour-proof.

So while the tech was there since the Noughties to do well-presented HD webcomics, both bandwidth and endpoint limitations meant there was little point in going with anything beyond simple shapes and primary colours, which led to the internet basically reinventing the Yellow Kid phase of daily comix.

In a way this may have been liberating for writer–artists who never figured out pen nibs, but it did stem from a decidedly cramped communications channel with little room for subtlety, host to metric gigapixels of largely superfluous sprite-stencil comics.

Eventually, the medium became the message. Rather like how 78 RPM gramophones were best suited to doo-wop and opera singers, the 2Knet was a stew of readily identifiable colours, anime with uniform line-weights, and text-only drama desperate to wring some kind of reaction out of people, any kind (because there was no real rapport with the audience).

As it happens, a lack of rapport is also the bane of computer games: how often do you find yourself laughing at an achievement?

The fundamental problem would be that the machine has no Goddamn sense of humour, which makes it difficult to tell whether or not you get it. And so the goals tend to be defined in terms of whatever happens to be easy to measure: speedrunners are really just doubling down on what computers are (usually) good at, keeping accurate time. Notably, all except glitchless runs tend to step outside the bounds of what you'd call the regular action of proceeding through the game, to indulge in walking through walls and so on. It suggests a funny corollary to Caillois' observation that the athlete is not playing but working: speedrunners are effectively ranked competitive beta-testers who complain about rugpulls when the game gets patched out from under them.

In the same vein, skill in the traditional sense isn't even what's being rewarded in action games. Where normally we consider the ability to push the envelope to be a natural extension of extraordinary skill, action games are unmistakably paint-by-numbers; there's always a snap-to grid, and the only options are to fulfil the requirements faster or else to demolish the barriers altogether. (In truth, the only real difficulty in a computer game is cognitive difficulty, which, quite tragically, is what most punters are desperate to avoid.)

A better word might be production, since players are always trying to maximize their output of damage-per-second or simoleons or whatever. The important thing is to produce win states, to optimize production towards six-sigma efficiency, and of course the pro gamer is always producing content.

To ensure players are instantly made aware when production has been successful, games have to provide clear feedback: beeping, flashing something in the centre of the screen, some signal that can't be missed. This eventually becomes invisible to players, much as pedestrians usually aren't aware of the sound of their footsteps; however, it has always been one of the easier ways to mock computer games as an immature art form. Not entirely without reason: a sign of maturity is often the ability to take something in and then sit with it, and the action game does not sit; it jumps, sometimes even doubly so.

In artistic terms, the action game is a broken record that wants you to hit the same note over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over and over, and over, to a degree that would be laughable in any other field.

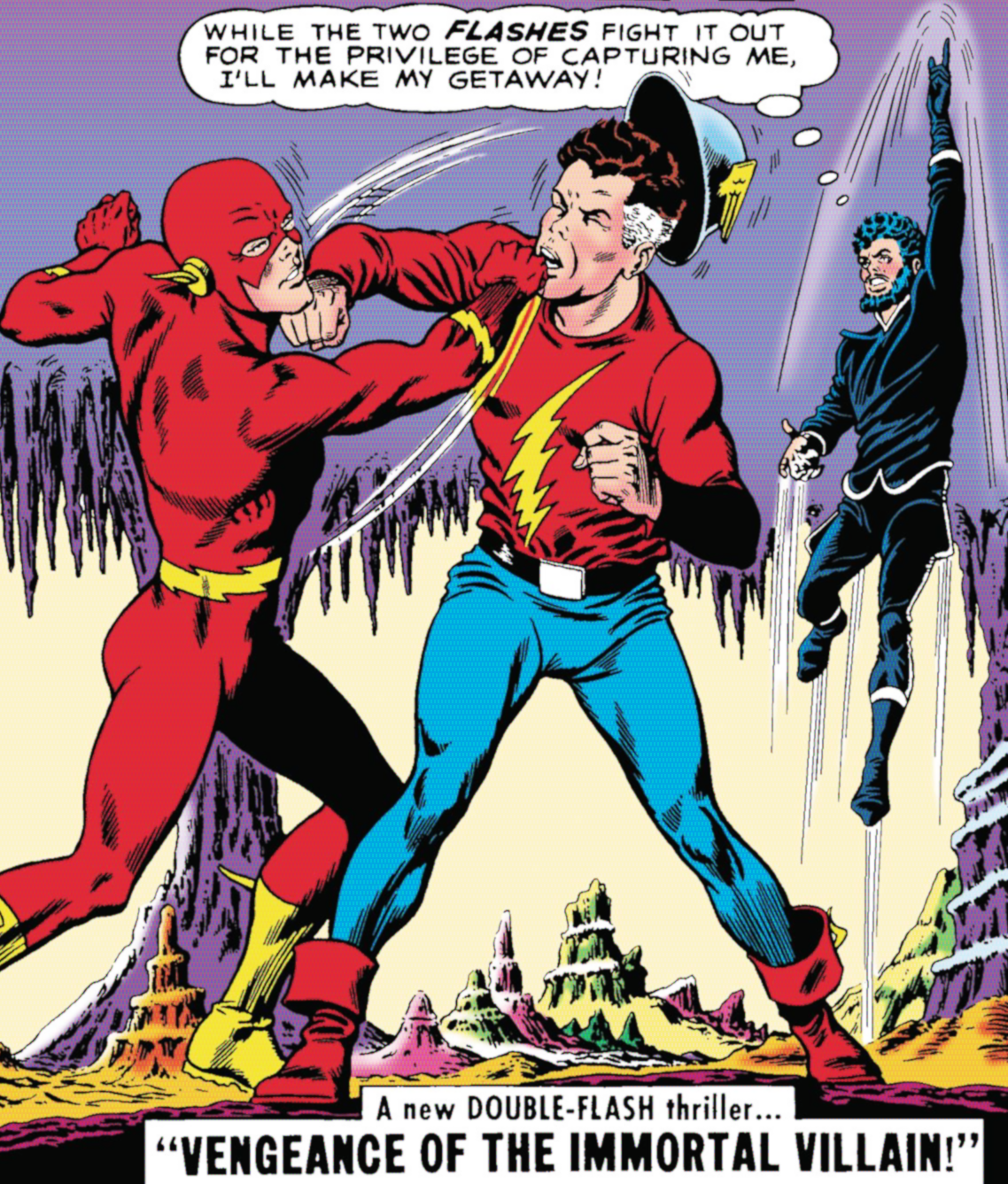

Superhero costumes maximized recognizability across different artists and made it easy to pick out the heroes from the frantic action, which incidentally is the same problem computer games are often trying to solve (tellingly, Green Lantern may have been the odd one out in The DC Universe™ lineup on account of sporting the least lurid colour palette). The blaring crisp signals are like military bugle calls, valued primarily for their clarity rather than their æsthetic impact. Superhero comics, it bears keeping in mind, were once so dominant that some people came to think of bande dessinée in general as an evolutionary dead-end, good for nothing but Sunday funnies and power fantasies. And with games, the situation is even more dire; they can barely manage to pull off comedy.

The era of zero subtlety has also resulted in a slew of shows that fail to stick the landing because they're created by the bathroom singers of deviantArt who devoted their formative years to marinading in the primary colours of emotion (cute/creepy/cringe) to the detriment of all else. Piling accessories onto your OC is an offshoot of this as well: it was the Web 1.0 equivalent of the virtual fashion show, looksmaxing fictional characters being otherwise trivial.

Æsthetes previously excused spending time on art by claiming that it trains our capacity for emotional response (and presumably, the ability to recognize complex emotions in yourself).

When the only possible æsthetics now come down to playground toys and realistic plus yellow paint, what room does that leave for advancing towards novel sensations?

Which is not to say that there can be no place for primary colours. But when everyone's trying to be Superman, there's bound to be a spandex shortage.

Purple patches from the silver screen

overdue for the rubbish heap dept.

21 March 2026

Back when the ill-considered field of ludology was trying to contrive an excuse for not putting down their Nintendo controllers, they came up with something called process intensity. Its raison d'être seems to have been to act as an easy way to cordon off all that effeminate storytelling from Real Gaming™ so that the Serious Ludo ologists could get back to the all-important business of watching Superb Mario bounce around in their idiot boxes.

The conceit was based on the vague intuition that cutscenes aren't very game-like, and when a system is streaming video files off a CD-ROM it's not doing much in the way of novel computing, just shovelling prepackaged bytes into the furnace of a playback plugin.

This may have sounded sensible in the age of rotating optical platters, when only a few titles like Interstate 76 rendered everything in-engine. The renegade interrupt of Mass Effect and elements bursting free from flashbacks in Cryostasis had yet to blur the lines between interactive running-around and passive consumption of canned storylines; that could only happen once the tech moved beyond pre-rendered cutscenes and slow storage.

In fact there was never much that could be done to salvage the whole edifice: showing that the concept is absurd is a fairly simple task. Consider a convoluted function that returns four times its input.

    function quaddmg(sum)
        result = sum + sum
        result = result + result
        return result
    end

This is more process-intensive than an optimized version:

    function quadruple(sum)
        return sum * 4
    end

However, the additional processing just amounts to the computer spinning its wheels; it does not speak to a more game-like experience. The metric is, if anything, even more useless than body-mass index or measuring programmer productivity by how many lines of code they push to production.

Real codebases often run to several million lines, which makes it an open question exactly how much a given program may be optimized without breaking anything (hence the shift to automated testing in the 21st century; if nothing else, it provides a basic safety net against unexpected side-effects.)

Process intensity properly belongs to the age before unit testing, when games had to be implemented as hand-tuned C code because it was the only way to squeeze performance out of the hardware. The term was obsolete practically the moment it saw print, as there has been nary a mainstream game created on bare metal since RollerCoaster Tycoon and Descent.

The real problem with cutscenes, especially pre-rendered ones, is that they do not achieve a graceful integration with the rest of a game, and so players simply shrug them off as something separate, basically an intrusive advertisement for the game designer's wish to be just like a real film director.

Education by arbitration

the mis-edutainment of the youth dept.

15 March 2026

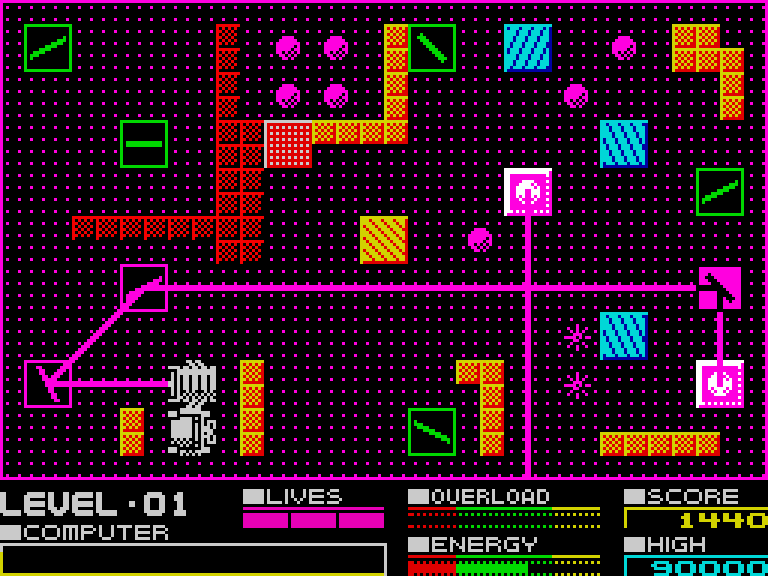

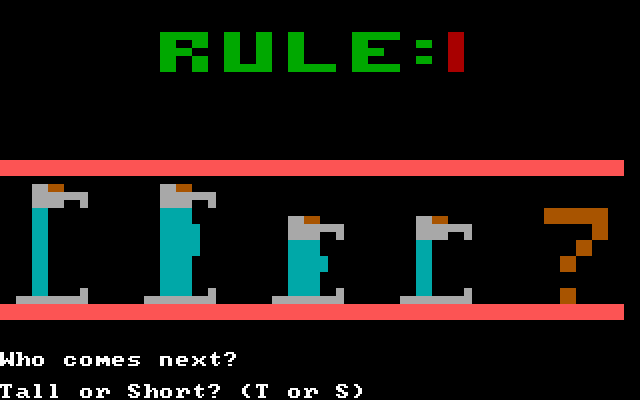

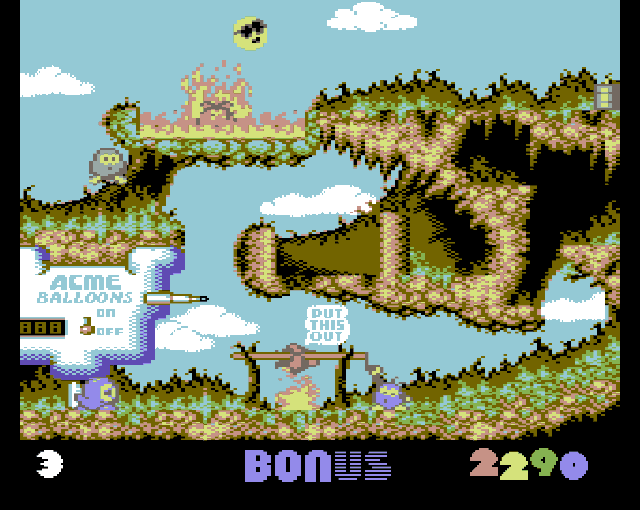

Puzzle games on 8-bit platforms were necessarily abstract due to the low resolutions and cramped palettes. While console games usually centered around jumping, shooting, or driving to cater to broad audiences, games for home computers could be considerably less constrained owing to the level of technical knowledge that had to be assimilated to get off the ground at all — even on the Commodore 64, rattling off a smattering of BASIC was required just to load games from cassette. This selected for players who possessed considerable reserves of patience.

Given that much of the software was produced entirely by one or two programmers, interaction tended to be simple: press a button to change one square at a time, certainly no side-effects reaching beyond the visible frame (not that it was feasible to hold more than one scene in memory at a time; this was the era of flick-screen scrolling for a reason.)

In consequence, 8-bit puzzle games feature some of the most illegible gameplay ever committed to magnetic tape, where you would be hard pressed to predict what was about to happen before you started mashing buttons to see what they did. Small wonder then that there was a trend towards Boulder Dash spinoffs: the format was a good fit for it, boulders slotting into the cubbyholes of Array data structures, and the mechanics almost made sense. This particular style of arbitrary interaction did stick around in so-called educational software, a sector where it didn't matter whether the content was at all comprehensible because the procurers were clueless parents and Boomer teachers who didn't look twice at the actual flow of the gameplay.

Funnily enough, while the simple rules governing the cellular automata in Conway's "game of life" made it especially suited for 8-bit computers, it took a 16-bit game, Populous, to place it in a broader context in a way that felt natural.
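Just how simple those rules are can be seen from a minimal sketch in Python. It is an assumption-laden convenience version: an 8-bit implementation would have used a small fixed array rather than a set of coordinates on an unbounded grid, and life_step is a name made up for the example.

```python
from collections import Counter


def life_step(live):
    """Advance Conway's game of life by one generation.

    `live` is a set of (x, y) tuples marking the live cells.
    """
    # Count, for every cell next to a live one, how many live neighbours it has.
    neighbour_counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Birth on exactly three neighbours, survival on two or three.
    return {
        cell
        for cell, count in neighbour_counts.items()
        if count == 3 or (count == 2 and cell in live)
    }
```

A horizontal row of three cells, {(0, 1), (1, 1), (2, 1)}, turns into the vertical row {(1, 0), (1, 1), (1, 2)} after one step and back again after two, the so-called blinker.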

New Year's Predix: Peak QWOP

finger gymnastics & pocket tennis dept.

26 Feb 2026

Around the time that shoulder triggers became curved, game interfaces were increasingly standardized: hardly anyone used the right thumbstick for anything other than nudging the camera, the red button always cancelled, and so on.

Meanwhile, the experience of wrestling with unintuitive controls was shunted to a separate genre, the QWOP Flash game and the awkward-physics simulator; as it happens, this was a good match for the particular brand of millennial humour best summarized as "isn't it cute how much we suck?"

And that it did.

The genre of gameplay slowly gathered steam, until eventually punters had become sufficiently inured to it that Totally Reliable Delivery Service was included in Game Pass. Now, this should have been the moment of triumph for qwoppers, the point at which they finally broke into the mainstream to become just another genre. That's not what happened, however.

Uninitiated normies looked at TRDS and wondered aloud why it didn't have better controls. I mean, why would you release a game with deliberately poor ergonomics? And here adherents found themselves suddenly under floodlights with their backs to the wall, because they had to explain what the point was.

And really, what was the point?

In Soviet modernism...

Po-mo and slo-mo dept.

18 Dec 2025

B-movies used to be filler shown before the serious main feature. Then Lucas and Spielberg happened, and the B-movie became the main feature, special-effects budgets competing with the star power of actors. As it happens, this was around the time that the modernist Hitchcock gave up on directing films, and postmodernism became a thing.

Postmodernism vs. modernism was a lot like the USA vs. Soviet Russia. Pomo won because it could get everything on credit, but in the process it turned into a parody of itself. (The observant reader may have noticed that Derrida is Reagan in this metaphor, just rattling off whatever mental fluff crossed the little teleprompter the post-homunculus in his head was holding up.)

Pomo was opposed to grand narratives, but it turns out it's perfectly possible to follow the little narratives right off a cliff, as well. For example: tariffs on international trade have surprisingly broad grassroots support, presumably because most people haven't the faintest idea how a microchip is made. Just like Derrida, in fact.